ask-term

Animated CLI for multi-provider LLMs with persistent sessions — chat with GPT, Claude, Gemini or Ollama directly from your terminal.

Screenshots

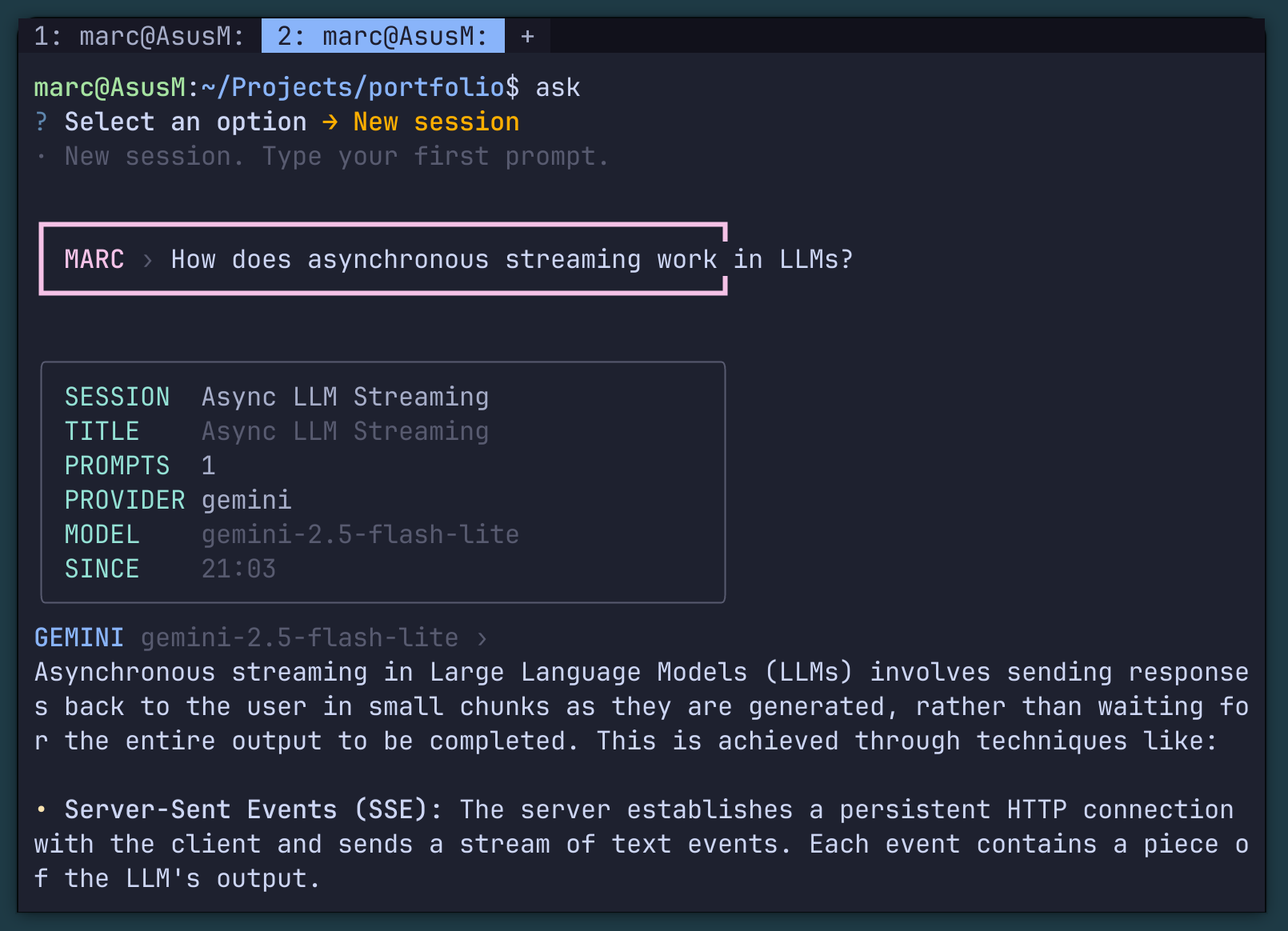

CLI chat interface starting a new session with Google Gemini

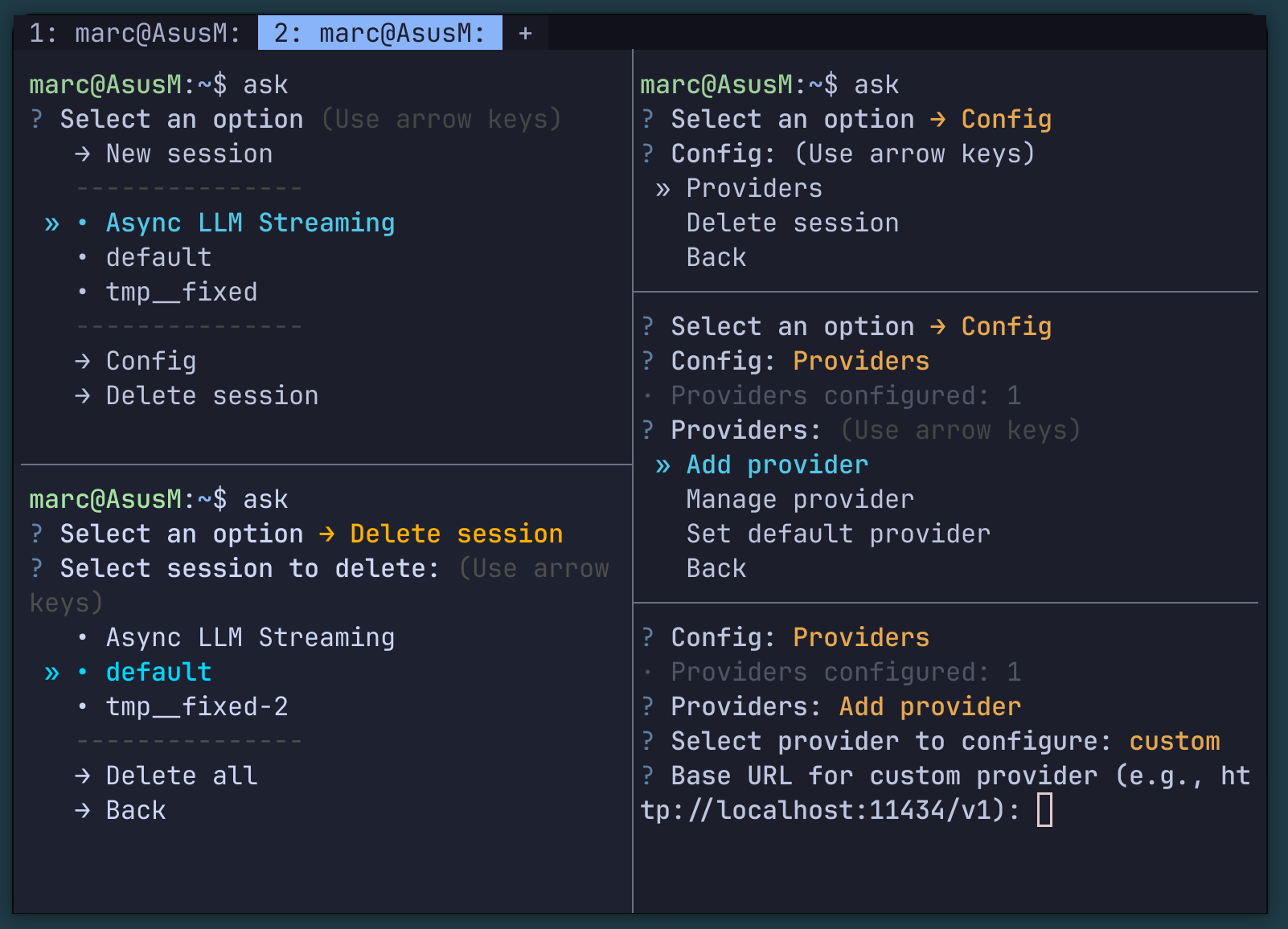

Session management system and multi-provider configuration

Overview

ask-term is a terminal-first interface for conversational AI. It provides an animated, interactive CLI experience for querying multiple LLM providers (OpenAI, Anthropic Claude, Google Gemini, and local Ollama models) without leaving your terminal workflow.

Sessions are persistent: your conversation history is stored locally so you can resume exactly where you left off. Switch between providers mid-session, compare responses, or keep a long-running coding conversation that survives terminal restarts.

Status: Beta. This package may contain undocumented errors and incomplete features. Feedback and contributions are welcome.

Quick Start

Requires Python 3.9+. API keys for remote providers must be set as environment variables.
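The two prerequisites above can be checked before a first run. The following is a minimal sketch, not part of ask-term itself. The environment variable names are assumptions based on the names each provider's own SDK conventionally reads. This README does not state which names ask-term expects. `python_ok` and `missing_keys` are hypothetical helpers. Ollama is omitted because it runs locally and needs no key.

```python
import os
import sys
from typing import Dict, List, Mapping, Tuple

# ASSUMPTION: conventional SDK variable names, not confirmed for ask-term.
ASSUMED_KEY_VARS: Dict[str, str] = {
    "openai": "OPENAI_API_KEY",
    "anthropic": "ANTHROPIC_API_KEY",
    "gemini": "GEMINI_API_KEY",
}


def python_ok(version: Tuple[int, int] = sys.version_info[:2]) -> bool:
    """Return True if the interpreter meets the stated 3.9+ requirement."""
    return version >= (3, 9)


def missing_keys(env: Mapping[str, str] = os.environ) -> List[str]:
    """Return the remote providers whose assumed key variable is unset or empty."""
    return [name for name, var in ASSUMED_KEY_VARS.items() if not env.get(var)]


if __name__ == "__main__":
    print("Python 3.9+:", "ok" if python_ok() else "too old")
    for provider in missing_keys():
        print(f"No key found for {provider} ({ASSUMED_KEY_VARS[provider]})")
```

A missing key only matters for the provider you intend to use, so the check reports gaps rather than failing.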

Architecture

ask-term is a single-command Python package that runs fully client-side.

Provider Adapters: Each LLM provider (OpenAI, Claude, Gemini, Ollama) has a thin adapter implementing a common streaming interface. Adding a new provider requires implementing just two methods: stream_chat() and list_models().

Session Store: Conversations are persisted as JSON files in ~/.ask-term/sessions/. Each session stores the full message history, provider, model, and metadata. Sessions are indexed by a short human-readable slug.

Renderer: The terminal renderer uses ANSI escape codes for animation effects and streaming output. It works with any POSIX-compliant terminal emulator without additional dependencies.

Entry Point: A single ask-term command drops you into an interactive session selector, or you can pass a prompt directly for one-shot queries.
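The adapter and session-store design described above might look roughly like the sketch below. Only the method names stream_chat() and list_models() and the stored fields (message history, provider, model, metadata, slug) come from this README. The signatures, the JSON layout, and the names `ProviderAdapter`, `EchoAdapter`, `run_turn`, `save_session`, and `load_session` are assumptions for illustration and may differ from the real code.

```python
import json
import tempfile
from abc import ABC, abstractmethod
from pathlib import Path
from typing import Dict, Iterator, List

Message = Dict[str, str]  # assumed shape: {"role": "user", "content": "hi"}


class ProviderAdapter(ABC):
    """Hypothetical common interface; signatures are assumed."""

    @abstractmethod
    def stream_chat(self, messages: List[Message], model: str) -> Iterator[str]:
        """Yield the reply as a stream of text chunks."""

    @abstractmethod
    def list_models(self) -> List[str]:
        """Return the model names this provider offers."""


class EchoAdapter(ProviderAdapter):
    """Stand-in provider that streams the last message back word by word."""

    def stream_chat(self, messages: List[Message], model: str) -> Iterator[str]:
        for word in messages[-1]["content"].split():
            yield word + " "

    def list_models(self) -> List[str]:
        return ["echo-1"]


def run_turn(adapter: ProviderAdapter, model: str,
             messages: List[Message], prompt: str) -> List[Message]:
    """Append the user prompt, collect the streamed reply, return the new history."""
    history = messages + [{"role": "user", "content": prompt}]
    reply = "".join(adapter.stream_chat(history, model)).strip()
    return history + [{"role": "assistant", "content": reply}]


def save_session(root: Path, slug: str, provider: str, model: str,
                 messages: List[Message]) -> Path:
    """Write one session as <root>/<slug>.json (assumed file layout)."""
    root.mkdir(parents=True, exist_ok=True)
    path = root / f"{slug}.json"
    payload = {"slug": slug, "provider": provider, "model": model,
               "messages": messages, "metadata": {}}
    path.write_text(json.dumps(payload, indent=2), encoding="utf-8")
    return path


def load_session(root: Path, slug: str) -> dict:
    """Read a session back by its slug."""
    return json.loads((root / f"{slug}.json").read_text(encoding="utf-8"))


if __name__ == "__main__":
    # Uses a temporary directory instead of ~/.ask-term/sessions/.
    root = Path(tempfile.mkdtemp()) / "sessions"
    history = run_turn(EchoAdapter(), "echo-1", [], "hello from the terminal")
    save_session(root, "demo-chat", "echo", "echo-1", history)
    print(load_session(root, "demo-chat")["messages"][-1]["content"])
```

Because the session file records the provider and model alongside the messages, a resumed session can be handed to whichever adapter matches, which is one way the mid-session provider switching could work.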